After the typical malicious email attack is launched, its first victim will be compromised in under 4 minutes. Now, emerging predictive models may point to new ways to neutralize that threat.

Data science may soon be able to predict when the next email fraud attack will hit your company's inboxes. But why should we care?

After all, what's the value of being able to predict these assaults? Is an attack via email still even relevant in the age of Slack, Microsoft Teams and other business messaging apps?

Let's look at the numbers to find out. According to the Wall Street Journal, 76% of businesses suffered business email compromise (BEC) or other forms of email attack last year, and 42% report those attacks were successful.

Then there's human nature: 46% of employees say they would click on a potentially malicious email because, in their words, "opening email on my work computer is safe." In fact, a typical email attack will snare its first victim in just under four minutes and can net attackers $130,000 or more per company.

So yeah. Not only does email remain our most important business communication and collaboration tool, but it's also quickly becoming a prime attack vector for fraudsters seeking to circumvent your cyber-defenses by targeting sophisticated, socially engineered messages to very human recipients.

But is it really possible to stop them by predicting their next move?

Predictive Reasoning

In part one of the Predicting Email Fraud series, we looked at breakthrough research findings that could soon enhance the Agari solutions organizations count on to protect themselves from email-based threats.

In that investigation, our researchers found that organizations receive a new malicious email at an average rate of one every six minutes, with 90% falling within an average 16-minute window.

That's an alarming attack frequency, to be sure. But what's really shocking is that this average is consistent across all organizations, from SMBs to global enterprises, regardless of industry.

To be clear, the specifics vary widely from one organization to the next. Each has its own unique attack peaks and valleys. But overall, they share that same average 6-minute interval between attacks.
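To make those two statistics concrete, here is a minimal Python sketch of how an average interval and a 90% window could be computed from arrival timestamps. The function names are hypothetical, the sample timestamps are invented for illustration, and reading the "90% window" as the 90th percentile of the gaps between consecutive malicious emails is an assumption rather than the researchers' stated method.

```python
import math
import statistics
from datetime import datetime, timedelta


def interarrival_minutes(timestamps):
    """Gaps, in minutes, between consecutive arrivals (input may be unsorted)."""
    ordered = sorted(timestamps)
    return [(b - a).total_seconds() / 60 for a, b in zip(ordered, ordered[1:])]


def summarize_gaps(gaps):
    """Mean gap and nearest-rank 90th percentile gap, both in minutes."""
    ordered = sorted(gaps)
    # Nearest-rank percentile: the smallest gap that covers 90% of observations.
    idx = max(0, math.ceil(0.9 * len(ordered)) - 1)
    return {"mean": statistics.fmean(ordered), "p90": ordered[idx]}


# Invented arrival times for illustration only (not real attack data).
start = datetime(2022, 1, 3, 9, 0)
arrivals = [start + timedelta(minutes=m) for m in (0, 1, 2, 4, 15, 16, 18, 40, 41, 43)]
print(summarize_gaps(interarrival_minutes(arrivals)))
```

Run against a real feed of flagged messages, the same two numbers would show how closely a given organization tracks the averages reported above.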

The implications? Potentially enormous, especially if this consistency can be used to formulate a predictive model that accurately projects when the next malicious email is likely to arrive.

Two-Minute Warning?

With that in mind, our research team developed a set of models and used time series analysis to test their ability to predict the arrival of the next incoming malicious email.

Specifically, these models each leveraged a different time lag, ranging from 2 to 6 minutes, so we could determine if the prior few minutes of incoming email activity would dictate the prediction for the next minute's activity.
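As a rough illustration of what a lag-based model can look like, the sketch below fits a simple autoregressive model by least squares: the count for the next minute is predicted from the counts in the previous 2 to 6 minutes. This is an assumption about the general approach, not the research team's actual model. The function names, the tiny ridge term, and the synthetic per-minute counts are all hypothetical.

```python
import random


def fit_lagged_model(counts, lag, ridge=1e-9):
    """Least-squares fit of counts[t] on an intercept plus the previous `lag` values."""
    rows = [[1.0] + [float(counts[t - k]) for k in range(1, lag + 1)]
            for t in range(lag, len(counts))]
    targets = [float(counts[t]) for t in range(lag, len(counts))]
    n = lag + 1
    # Normal equations (X^T X) b = X^T y; the tiny ridge term (an assumption
    # added here) only guards against a singular matrix on degenerate data.
    a = [[sum(r[i] * r[j] for r in rows) + (ridge if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    b = [sum(r[i] * y for r, y in zip(rows, targets)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
            b[r] -= factor * b[col]
    # Back substitution.
    coef = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(a[i][j] * coef[j] for j in range(i + 1, n))
        coef[i] = (b[i] - tail) / a[i][i]
    return coef


def predict_next(coef, recent):
    """Predict the next minute from `recent` counts (oldest first)."""
    lag = len(coef) - 1
    window = recent[-lag:]
    # coef[1] weights the most recent minute, coef[2] the minute before, and so on.
    return coef[0] + sum(c * v for c, v in zip(coef[1:], reversed(window)))


def holdout_rmse(counts, coef, start):
    """Root-mean-square error of one-step-ahead predictions from index `start` on."""
    errs = [(counts[t] - predict_next(coef, counts[:t])) ** 2
            for t in range(start, len(counts))]
    return (sum(errs) / len(errs)) ** 0.5


# Synthetic per-minute counts with occasional surges (illustrative only).
rng = random.Random(7)
counts, level = [], 0.0
for _ in range(600):
    if rng.random() < 0.03:
        level += rng.uniform(3, 8)  # a new batch begins
    level *= 0.8                    # the surge decays
    counts.append(round(level + rng.random()))

split = int(len(counts) * 0.7)      # fit on the first 70%, score on the rest
for lag in range(2, 7):
    coef = fit_lagged_model(counts[:split], lag)
    print(f"lag={lag} holdout RMSE={holdout_rmse(counts, coef, split):.3f}")
```

Scoring each lag on held-out minutes, rather than on the data used for fitting, keeps longer lags from looking better simply because they have more parameters.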

These models worked surprisingly well—especially one with a 2-minute time lag.

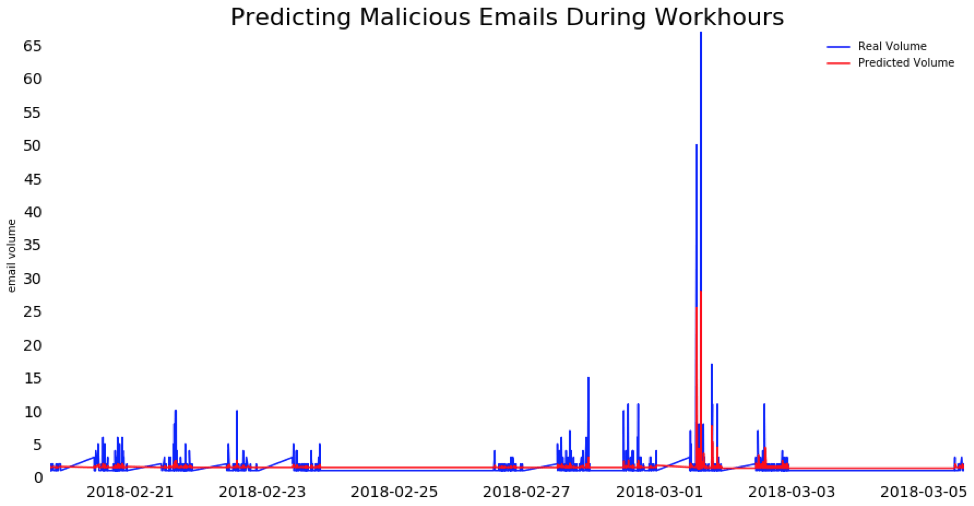

The chart above captures one company's rate of predicted and actual email attacks using a 2-minute time lag over a 45-day period.

In this model, each two-minute window of incoming email activity was used to predict the next minute of activity. The red line represents predicted attacks, while the blue line represents actual attacks.

As you can see, this 2-minute time lag model anticipates spikes in new malicious emails with a significant degree of accuracy.

Indeed, while the distribution of attacks appears random, there is in fact an underlying pattern that this 2-minute model successfully anticipates: these malicious emails arrive in batches.

Surge Protection

Looking at the chart, it's clear that malicious emails arrive in clusters over hours and days, with spikes as volumes surge.

The 2-minute model does quite a good job of predicting these spikes because as the volume of malicious emails rises, it is likely to keep going up, in line with activity seen in the prior two minutes.

Likewise, volumes are likely to decrease as new attacks decelerate during the preceding two minutes. And while there isn't any consistency in the gaps between new batches of malicious email, the overall average is—wait for it—that same 6-minute interval.
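One simple way to check whether a series has this kind of momentum is to measure how often the direction of change in one minute carries over into the next. The sketch below is a hypothetical diagnostic, not part of the research described here, and the example counts are invented.

```python
def trend_persistence(counts):
    """Fraction of minute-to-minute changes that continue in the same direction.

    Only steps where the previous change was nonzero are considered.
    Returns None if there are no such steps.
    """
    diffs = [b - a for a, b in zip(counts, counts[1:])]
    pairs = [(prev, nxt) for prev, nxt in zip(diffs, diffs[1:]) if prev != 0]
    if not pairs:
        return None
    same = sum(1 for prev, nxt in pairs if nxt != 0 and (prev > 0) == (nxt > 0))
    return same / len(pairs)


# Invented per-minute counts: a surge that builds, then fades.
example = [0, 1, 3, 6, 8, 7, 4, 2, 1, 0]
print(trend_persistence(example))
```

A value well above 0.5 would suggest that rises tend to follow rises and falls tend to follow falls, which is the behavior a short-lag model can exploit.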

Of course, while this 2-minute model seems to perform well, it also sparks new questions about the timing of these batches of malicious emails. Are these coordinated attacks on this company? Are they in response to news involving the company or its industry?

We know email fraud has gone up following sensational news, for instance. Reports about organizations raising capital also seem to inspire email fraudsters scheming to extract some of that money.

So, inquiring minds want to know: How can we improve predictive models to account for these kinds of contributing factors and further enhance the way we use artificial intelligence to prevent email fraud attacks from ever reaching recipients?
